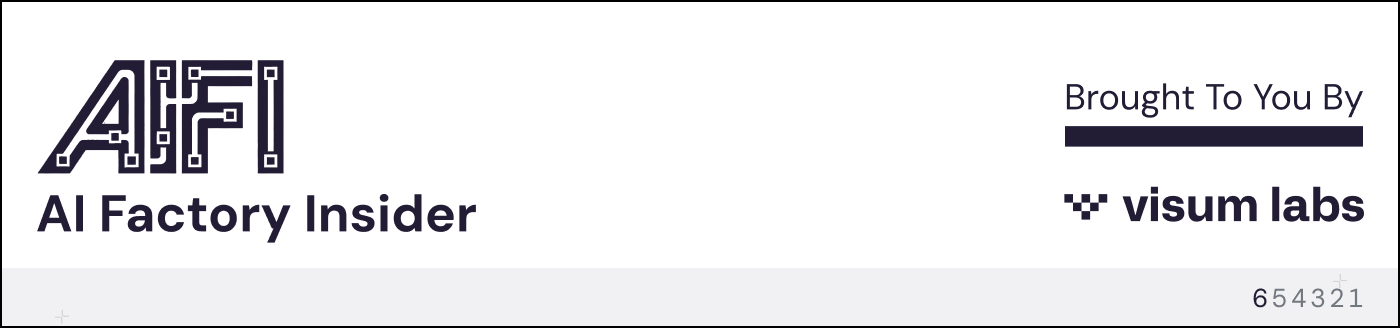

A 2024 Deloitte study projects US manufacturers will need 3.8 million new workers by 2033, and half those jobs may go unfilled.

The bigger problem knocking at the door is what happens when workers retire.

Nearly a quarter of the current manufacturing workforce is 55 or older, and when they leave, they take decades of pattern recognition, equipment intuition, and knowledge that never made it into any manual.

AI was supposed to solve this by capturing expertise and making it available to everyone on the floor, but most companies are spending their AI budgets on algorithms while ignoring the people who understand how things work.

👋🏻 I'm Leonardo Ubbiali. This week we're looking at the shift from automation to autonomy and why the companies getting it right are investing in people first.

97% of manufacturing firms say they're concerned about brain drain from retirements, and the knowledge at risk is exactly the kind that never made it into any manual.

At a chemical plant in the Midwest last year, the predictive maintenance system showed nothing unusual on Reactor 7. Vibration data was within normal range, temperature was stable, and all sensors showed green.

A process engineer who had worked that reactor for 15 years heard something different.

There was a subtle pattern in the sound of the bearing assembly, and she recognized it.

She had heard it once before, back in 2019, about two weeks before a seal failure took the reactor offline for three days.

She also remembered that after that failure, maintenance had replaced the seal with a different spec because the original was backordered.

That detail existed nowhere in any system: not in the CMMS, not in the maintenance logs, and not in any database the AI could access.

The predictive model had learned from 18 months of sensor data, but it had no way of knowing that someone had swapped a part six years ago, or that the machine runs differently when humidity spikes, or that a particular sound means trouble even when the numbers look fine.

The engineer knew all of that because she had been there.

This is what Rockwell's CTO Cyril Perducat called "the shift from automation to autonomy" at Davos last week.

Automation handles repetitive tasks.

Autonomy means systems that self-organize and self-optimize.

But most companies are spending their AI budgets on algorithms while ignoring the people who actually understand how things work.

Remember Edition #1?

BCG research shows successful AI implementations allocate 70% of effort to people and processes, 20% to infrastructure, and only 10% to algorithms. Most manufacturers flip that ratio.

Autonomy built without institutional knowledge creates brittle systems that fail when they encounter something the model hasn't seen before.

Knowledge capture still gets treated as a nice-to-have with no line item, no deadline, and no owner. Every quarter another batch of veterans hits retirement age and nothing gets documented.

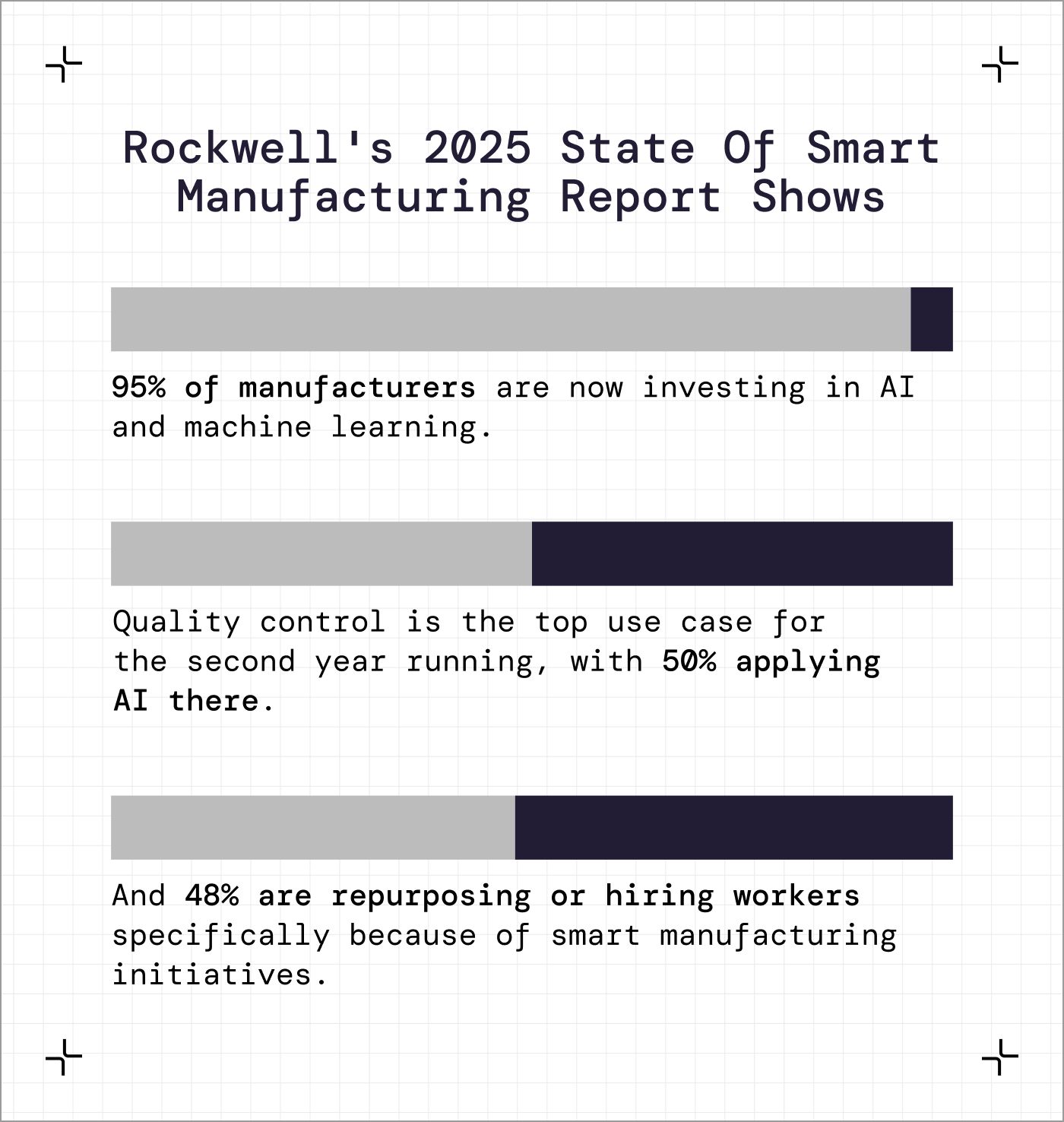

Five things you can do this quarter:

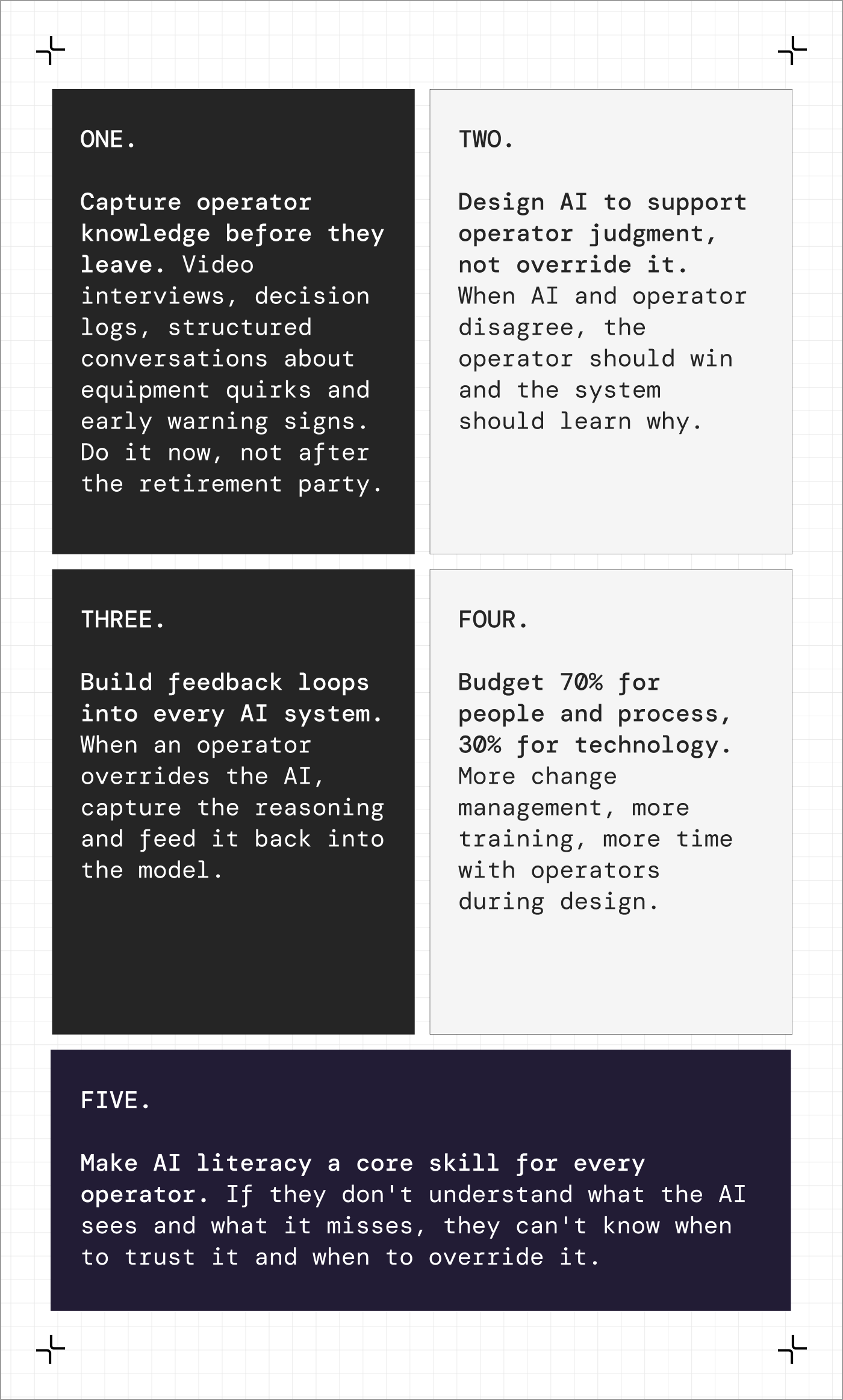

The problem: You have automated systems across your plant, but you don't know which ones are ready to operate more autonomously and which ones still depend on human judgment to catch what the sensors miss.

What you need:

⦁ List of your automated systems and processes (predictive maintenance, quality inspection, scheduling, etc.)

⦁ Recent examples of operator overrides or manual interventions

⦁ 15 minutes
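If your override records already live in a spreadsheet or CMMS export, a few lines of Python can help you pick the examples to paste into the prompt. This is only a rough sketch: the record fields (`system`, `reason`), the sample entries, and the `summarize_overrides` helper are all hypothetical, so adapt them to however your plant actually logs interventions.

```python
from collections import Counter, defaultdict

# Hypothetical override records; field names and values are illustrative only.
overrides = [
    {"system": "predictive maintenance", "reason": "unusual bearing noise"},
    {"system": "predictive maintenance", "reason": "part spec changed after repair"},
    {"system": "quality inspection", "reason": "false reject on glare"},
    {"system": "scheduling", "reason": "rush order"},
    {"system": "predictive maintenance", "reason": "unusual bearing noise"},
]


def summarize_overrides(records):
    """Count overrides per system and find the most common stated reason for each."""
    counts = Counter(r["system"] for r in records)
    reasons = defaultdict(Counter)
    for r in records:
        reasons[r["system"]][r["reason"]] += 1
    # Most-overridden systems come first.
    return [
        {
            "system": system,
            "overrides": n,
            "top_reason": reasons[system].most_common(1)[0][0],
        }
        for system, n in counts.most_common()
    ]


for row in summarize_overrides(overrides):
    print(f'{row["system"]}: {row["overrides"]} overrides, most often "{row["top_reason"]}"')
```

The systems at the top of that list are the ones where people are still supplying context the sensors miss, which makes them good candidates for the override examples in the prompt.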

The prompt (copy this):

“I'm a [YOUR ROLE] at a [YOUR COMPANY/FACILITY TYPE]. We have several automated systems running, and I need to assess which ones could move toward more autonomy and which ones still require heavy human oversight.

Here are our current automated systems:

⦁ [List your automated systems]

Here are recent examples where people overrode the system or intervened manually:

⦁ [Describe 3-5 recent overrides: what the system recommended, who overrode it, why, and what happened]

Analyze this and tell me:

1. Which systems are operating reliably enough to handle more autonomous decision-making?

2. Which systems are being overridden frequently, and what patterns do you see in those overrides?

3. Where is human judgment still critical, and why might it be hard to automate that judgment?

4. What data or context are the automated systems missing that people are providing?

5. If I wanted to move one system toward greater autonomy, which one would you recommend starting with and what would need to change?

Be specific about what's working and what's not. I need to prioritize where to invest.”

What you'll get back:

Fortune: "Let's train workers on industrial AI, not replace them"

Kriti Sharma makes the case that AI should accelerate skills training, not eliminate the need for it. She estimates manufacturing has 1-2 years to capture decades of institutional knowledge before it's lost to retirement.

Time to value: 8 minutes

What does your most experienced operator know that isn't captured in any system?

Hit reply. I read every email.

Leo