Samsung announced at MWC 2026 that every factory it operates worldwide will run on autonomous AI by 2030.

YoungSoo Lee, Executive Vice President and Head of Global Technology Research at Samsung Electronics, explained the vision at Mobile World Congress in Barcelona:

"The next phase of manufacturing innovation lies in building autonomous environments where AI truly understands operational contexts in real time and independently executes optimal decisions."

AI agents will plan production schedules, execute decisions, and optimize workflows without waiting for human approval.

But when an AI agent autonomously reroutes $2 million of inventory to the wrong warehouse at 3am on a Tuesday, who's responsible?

👋🏻 I'm Leonardo Ubbiali. This week we're looking at why manufacturers need AI autonomy for speed but can't say who's liable when the AI makes the wrong call.

Manufacturing automation has always followed rules: if the temperature exceeds 500°F, shut down; if the defect rate hits 2%, alert a supervisor.

It's predictable, auditable, and slow.
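For contrast with what follows, here is a minimal sketch of that rule-based style, using the two example rules above. The `Reading` type, the `rule_based_actions` function, and the action strings are hypothetical, not taken from any real plant control system.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    temperature_f: float  # process temperature in °F
    defect_rate: float    # fraction of defective parts, e.g. 0.02 == 2%


def rule_based_actions(r: Reading) -> list[str]:
    """Fixed if/then rules (hypothetical): the same input always yields the same actions."""
    actions = []
    if r.temperature_f > 500:   # "temperature exceeds 500°F"
        actions.append("shut_down")
    if r.defect_rate >= 0.02:   # "defect rate hits 2%"
        actions.append("alert_supervisor")
    return actions


print(rule_based_actions(Reading(temperature_f=512, defect_rate=0.01)))  # ['shut_down']
```

Every action traces back to a rule someone wrote and signed off on, which is exactly what makes this style auditable and what goal-driven agents give up.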

AI agents pursue outcomes instead, and Samsung is deploying them across quality control, production scheduling, logistics, and safety monitoring.

Give an agent the goal "maximize throughput on Line 3" and it will autonomously adjust machine speeds, reschedule maintenance, reroute materials, and coordinate with suppliers.

Agents rebalance production schedules in seconds versus the hours or days human approval takes, but autonomous decisions at machine speed also mean autonomous mistakes at machine speed.

The liability problem

Product liability law assumes humans made the decision.

When a part fails, you trace back to who approved the specification, who signed off on the supplier, and who authorised the design change.

But what happens when an AI agent autonomously changes a process parameter at 3am because it incorrectly predicted that the change would improve yield?

The plant manager was asleep, the engineer who trained the model six months ago didn't anticipate this scenario, and the vendor can't be held liable for every decision across every deployment.

In the Mobley v. Workday case, the court allowed the case to proceed on the theory that Workday could be held liable as an "agent" of employers, as the plaintiff had plausibly alleged that employers delegated their hiring decisions to Workday's AI.

That was employment screening. The stakes are higher in manufacturing, where a single autonomous decision can cascade into millions of dollars in downstream impact.

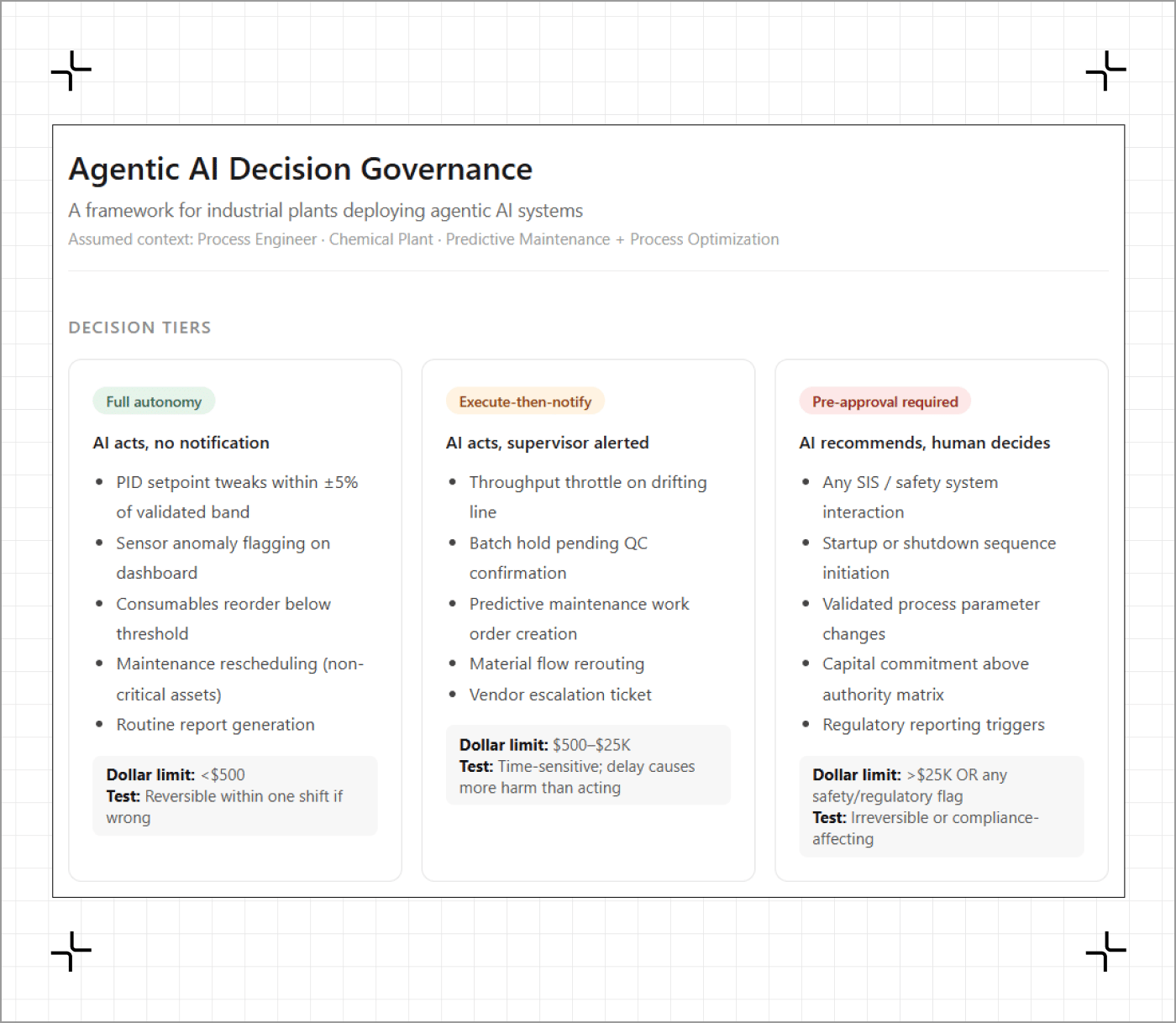

Samsung's governance framework uses bounded autonomy: low-stakes decisions such as material reordering get full autonomy, medium-stakes decisions such as production changes execute autonomously but notify humans immediately, and high-stakes decisions affecting safety require human approval before execution.
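The three tiers above can be sketched as a simple classifier. This is a minimal illustration, not Samsung's implementation: the `Tier` and `Decision` names, the decision kinds, and both dollar thresholds are hypothetical values a plant would set in its own governance policy.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    FULL_AUTONOMY = "execute"               # act, log only
    EXECUTE_THEN_NOTIFY = "execute_notify"  # act, then notify a human immediately
    PRE_APPROVAL = "await_approval"         # a human must approve before execution


@dataclass
class Decision:
    kind: str             # hypothetical labels, e.g. "material_reorder"
    dollar_impact: float  # estimated financial exposure in USD
    affects_safety: bool


# Hypothetical thresholds: each plant would set its own.
NOTIFY_THRESHOLD_USD = 50_000
APPROVAL_THRESHOLD_USD = 1_000_000
NOTIFY_KINDS = {"production_change"}


def classify(d: Decision) -> Tier:
    # Checked first, so no low dollar figure can downgrade a safety-relevant decision.
    if d.affects_safety or d.dollar_impact >= APPROVAL_THRESHOLD_USD:
        return Tier.PRE_APPROVAL
    if d.kind in NOTIFY_KINDS or d.dollar_impact >= NOTIFY_THRESHOLD_USD:
        return Tier.EXECUTE_THEN_NOTIFY
    return Tier.FULL_AUTONOMY


print(classify(Decision("material_reorder", 8_000, False)).value)    # execute
print(classify(Decision("production_change", 20_000, False)).value)  # execute_notify
print(classify(Decision("line_speed_change", 5_000, True)).value)    # await_approval
```

Under these made-up thresholds, the $2 million inventory reroute from the opening would have waited for a human, which is the safety margin bounded autonomy buys and also the speed it costs.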

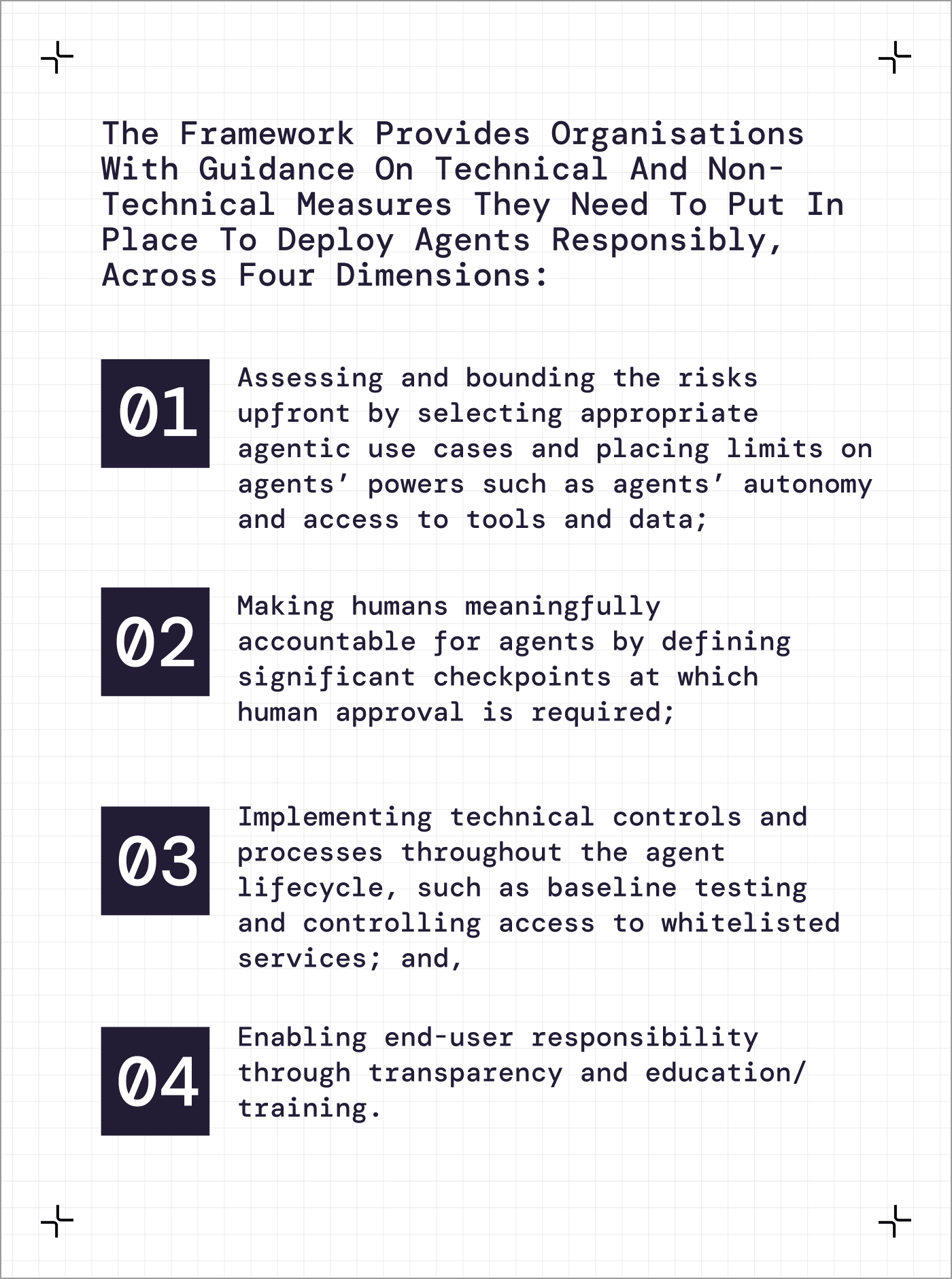

Singapore's framework, released in January 2026, takes the same approach, distributing responsibility across leadership who define goals, product teams who handle design, and cybersecurity teams who protect systems.

Both recommend human approval at checkpoints for high-stakes actions.

But bounded autonomy is slower: if the AI escalates production schedule changes to a human for approval, you've lost the speed advantage that justified the system.

A manufacturer I spoke to last week is deploying agentic AI for production scheduling and running it in shadow mode for 90 days: the agent recommends, humans execute.

They're logging every recommendation, override, and outcome to build the audit trail they'll need when the board asks who's accountable.
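Here is a minimal sketch of the kind of shadow-mode log that manufacturer describes, assuming each record captures the agent's recommendation, the action the human actually took, and the eventual outcome. `ShadowRecord`, `ShadowLog`, the decision IDs, and the action names are all hypothetical, not any vendor's API.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ShadowRecord:
    decision_id: str
    recommendation: str            # what the agent proposed
    human_action: str              # what the human actually executed
    outcome: Optional[str] = None  # filled in once the result is known
    logged_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())

    @property
    def overridden(self) -> bool:
        # An override is any case where the human did something other than the recommendation.
        return self.human_action != self.recommendation


class ShadowLog:
    def __init__(self) -> None:
        self.records: list[ShadowRecord] = []

    def record(self, decision_id: str, recommendation: str, human_action: str) -> None:
        self.records.append(ShadowRecord(decision_id, recommendation, human_action))

    def set_outcome(self, decision_id: str, outcome: str) -> None:
        for rec in self.records:
            if rec.decision_id == decision_id:
                rec.outcome = outcome
                return
        raise KeyError(decision_id)

    def override_rate(self) -> float:
        if not self.records:
            return 0.0
        return sum(r.overridden for r in self.records) / len(self.records)

    def to_jsonl(self) -> str:
        # One JSON object per line; asdict() skips properties, so add "overridden" explicitly.
        return "\n".join(
            json.dumps({**asdict(r), "overridden": r.overridden}) for r in self.records)


log = ShadowLog()
log.record("D-001", "move_job_17_to_line_2", "move_job_17_to_line_2")
log.record("D-002", "defer_line_3_maintenance", "keep_maintenance_schedule")
log.set_outcome("D-002", "maintenance completed on schedule")
print(log.override_rate())  # 0.5
```

The override rate is the number a board will ask about first: a falling rate over the 90 days is evidence the agent's recommendations are converging on what experienced people would have done anyway.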

Even with 90 days of data, when the agent makes 10,000 autonomous micro-decisions per month, how do you reconstruct what led to the one that caused a problem?
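One partial answer, sketched under stated assumptions: if every decision record stores a snapshot of the inputs the agent saw plus the IDs of the earlier decisions it depended on, the chain behind a bad decision can be walked backwards. `DecisionRecord`, `depends_on`, and `trace` are hypothetical names for illustration; real agents only produce such links if they are designed to log them.

```python
from dataclasses import dataclass, field


@dataclass
class DecisionRecord:
    decision_id: str
    action: str
    inputs: dict                                          # snapshot of the data the agent saw
    depends_on: list[str] = field(default_factory=list)  # upstream decision IDs


def trace(audit_log: dict[str, DecisionRecord], decision_id: str) -> list[DecisionRecord]:
    """Return the decision plus every upstream decision it depended on, upstream first.

    IDs missing from the log are skipped, so a gap in retention shows up as a shorter chain.
    """
    seen: set[str] = set()
    chain: list[DecisionRecord] = []

    def visit(did: str) -> None:
        if did in seen or did not in audit_log:
            return
        seen.add(did)
        for parent in audit_log[did].depends_on:
            visit(parent)
        chain.append(audit_log[did])  # appended after its parents

    visit(decision_id)
    return chain


audit_log = {
    "D-101": DecisionRecord("D-101", "forecast_demand_high", {"orders_week": 1200}),
    "D-102": DecisionRecord("D-102", "raise_line_3_speed", {"forecast": "high"}, ["D-101"]),
    "D-103": DecisionRecord("D-103", "skip_tool_change", {"line_3_speed": "max"}, ["D-102"]),
    "D-104": DecisionRecord("D-104", "reorder_resin", {"stock_kg": 300}),
}
print([r.decision_id for r in trace(audit_log, "D-103")])  # ['D-101', 'D-102', 'D-103']
```

Of the four logged decisions, only the three on the causal path come back; the unrelated resin reorder is excluded, which is what makes one bad decision out of 10,000 tractable to investigate.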

The EU's revised Product Liability Directive, which member states must implement by December 2026, says developers can be held strictly liable for defective AI. The harder question is what happens when the AI isn't defective but makes a decision that's technically correct based on its training yet contextually wrong.

Most vendor contracts place full liability on the customer while giving the vendor control over agent behaviour, so the customer absorbs all consequences but can't fully control the system.

Samsung is going all-in by 2030, but the governance question remains unanswered.

You need autonomy for speed, but autonomy without control creates liability exposure that boards won't accept.

Five things you can do this quarter

The Prompt (copy this):

I'm a [ROLE] at a [FACILITY] plant deploying agentic AI for [USE CASE].

Which decisions get full autonomy? Which need execute-then-notify? Which require pre-approval?

What dollar thresholds, safety criteria, and regulatory triggers determine classification?

If something goes wrong, who is accountable: plant manager, data science team, vendor?

What audit trail do we need?

What you'll get: a governance framework with three tiers of autonomy mapped to specific decision types, clear accountability assignments for each tier, escalation protocols with measurable triggers, audit requirements, and a responsibility matrix showing who's liable for different failure scenarios.

Samsung will showcase its governance strategy, which embeds safety mechanisms from the initial design stage, at the Samsung Mobile Business Summit.

Singapore Model AI Governance Framework for Agentic AI

Released January 22, 2026. The first dedicated governance model for autonomous AI. Covers bounding risks, distributing accountability, technical controls, and limiting agents to the minimum tools they require.

Time to value: 20 minutes

When your AI agent cancels a $500,000 purchase order at 3am because it predicted wrong, who's accountable under your current governance?

Hit reply. I read every email.

Leo