On March 11, 2023, Samsung's semiconductor division gave engineers access to ChatGPT.

Twenty days later, proprietary source code had been uploaded to OpenAI's servers three times.

The first incident: an engineer pasted semiconductor manufacturing code into ChatGPT to check for errors.

The second: an employee uploaded code used to identify equipment defects.

The third: a confidential internal meeting was recorded, transcribed, then fed to ChatGPT to generate minutes.

None of them were acting maliciously. They were just trying to do their jobs faster.

Before we get into what Samsung did next, quick question:

Does your plant have a policy governing which AI tools employees can use?

👋🏻 I'm Leonardo Ubbiali. This week we're looking at what Samsung's response to those leaks teaches manufacturers about why banning shadow AI makes the problem harder to manage, not smaller.

A process engineer uses ChatGPT to write a maintenance report faster. A quality manager feeds inspection images into a free vision tool she found on her own.

And the ops lead bought an AI scheduling tool on his company card last month because IT's approval queue takes six weeks and the production problem couldn't wait.

Each of these feels harmless.

But together they create an informal AI infrastructure underneath the systems you can see, processing data you can't track, generating decisions that appear in no audit log.

For a manufacturing plant, the exposure goes beyond data security.

An AI scheduling tool bought without IT involvement sits outside your MES. When it makes a recommendation, nothing in your production system knows it happened.

The decision is invisible to every downstream process that depends on accurate scheduling data.

The ban didn't work

Samsung banned generative AI tools across all company-owned devices and networks. The ban covered not just ChatGPT but also Microsoft's Bing AI and any competing service.

Within weeks, employees were using personal phones and home wifi to run the same tools, with no corporate visibility at all.

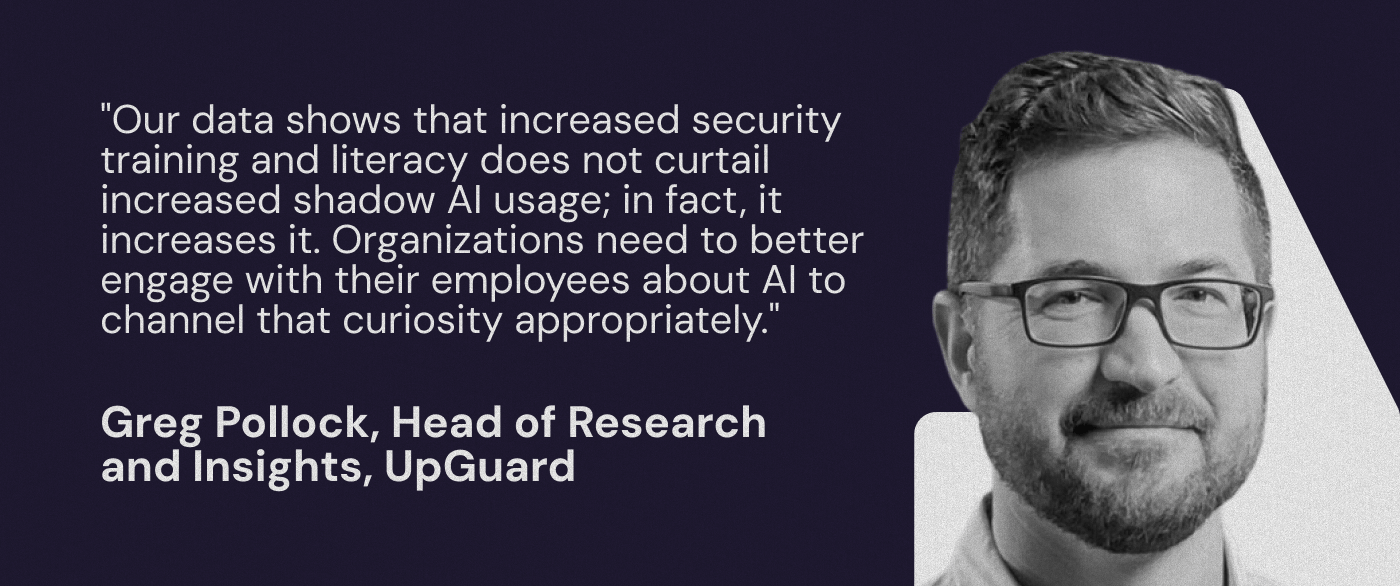

67% of AI usage across enterprises already happens through unmanaged personal accounts that bypass enterprise controls entirely.

Samsung's ban cut off usage on the networks IT could monitor and pushed the rest onto devices IT couldn't see.

Seven months after the leaks, Samsung unveiled Gauss, an internal LLM built on Samsung's own infrastructure, where data stays inside the company's environment.

By 2025, they had launched Gauss 2.0 and developed their own agentic AI tools.

By May 2025, the ChatGPT restrictions were lifted. Security protocols limited what data could be entered, and access went primarily to departments working with AI rather than to product development.

It took two years and a full internal AI development program to get back to roughly where they started, except this time governance was designed in from the beginning.

Until your plant has a policy, each employee is making their own call about what data is safe to share, with which tool, on which device.

That call is being made right now, in departments you probably haven't surveyed.

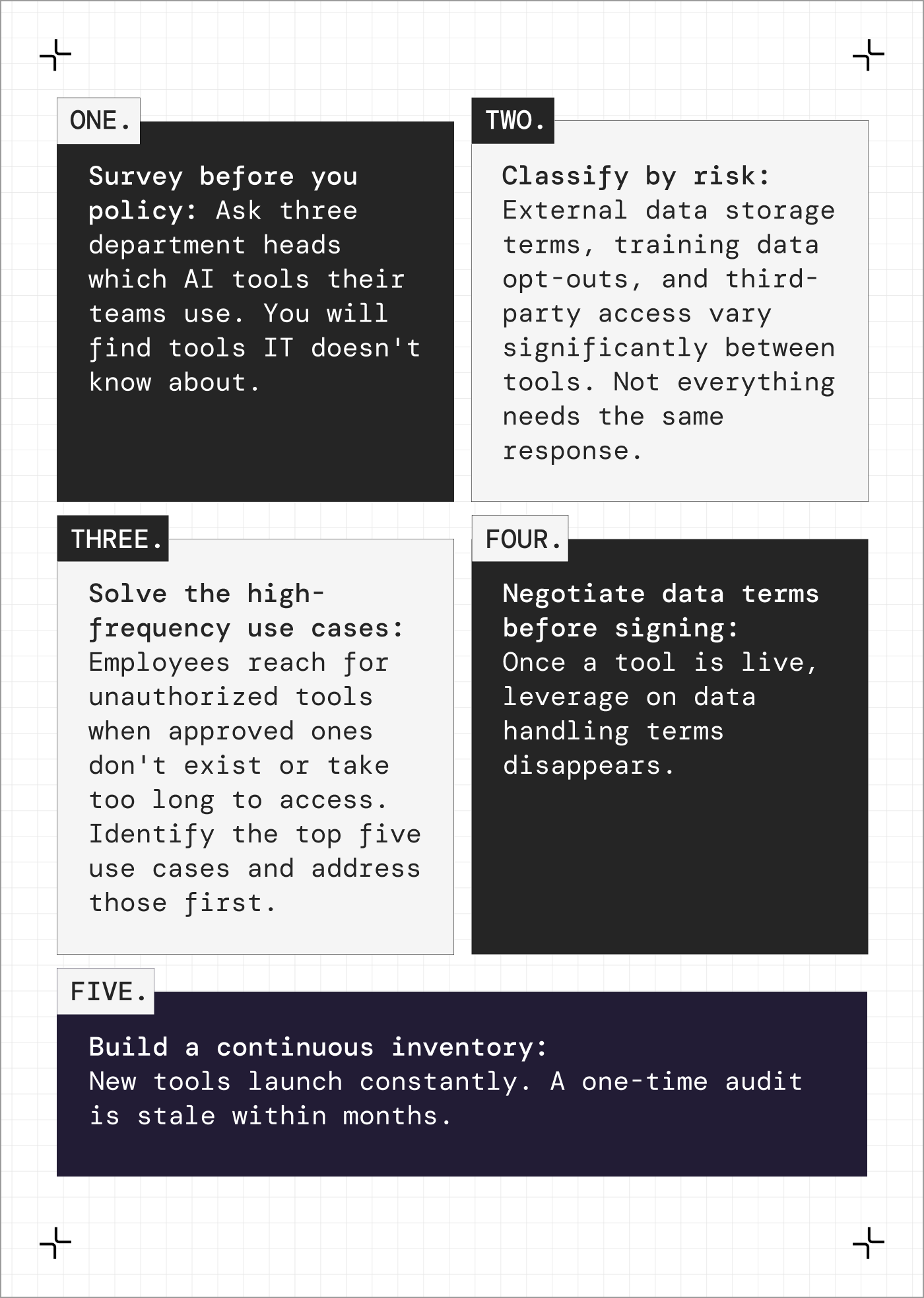

The manufacturers getting ahead of this run an informal audit first. Three department heads, ten questions, find out what's actually in use before writing a single rule.

Then they classify by risk.

A scheduling tool with no external data sharing is a different problem from a chatbot storing proprietary process inputs on a third-party server.

Then they solve for the high-frequency use cases, because employees use unauthorized tools when approved alternatives don't exist or take too long to reach.
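If it helps to see the classify-then-prioritize step written down, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `ToolUse` fields, the three risk tiers, the rules in `risk_tier`, and the sample inventory are hypothetical, not a standard or anything Samsung published. Swap in whatever your audit actually turns up.

```python
from dataclasses import dataclass


@dataclass
class ToolUse:
    """One row from the informal audit (all fields are hypothetical)."""
    name: str
    sends_data_offsite: bool   # does data leave the plant's own environment?
    handles_proprietary: bool  # process inputs, source code, meeting notes
    approved: bool             # did it go through IT?
    weekly_users: int          # rough headcount from the department heads


def risk_tier(tool: ToolUse) -> str:
    """Assumed rule of thumb: proprietary data leaving the plant is the worst case."""
    if tool.sends_data_offsite and tool.handles_proprietary:
        return "high"
    if tool.sends_data_offsite or tool.handles_proprietary or not tool.approved:
        return "medium"
    return "low"


def prioritize(tools: list[ToolUse]) -> list[ToolUse]:
    """Highest risk first; within a tier, the most widely used tool first."""
    order = {"high": 0, "medium": 1, "low": 2}
    return sorted(tools, key=lambda t: (order[risk_tier(t)], -t.weekly_users))


# Made-up inventory, loosely based on the examples in this issue.
inventory = [
    ToolUse("scheduling tool", False, True, False, 4),
    ToolUse("public chatbot", True, True, False, 25),
    ToolUse("approved reporting add-in", False, False, True, 12),
    ToolUse("free vision tool", True, True, False, 3),
]

for tool in prioritize(inventory):
    print(f"{risk_tier(tool):<6} {tool.weekly_users:>3} users  {tool.name}")
```

The tools at the top of that list are where an approved alternative will do the most good, because they combine the highest exposure with the most people already relying on them.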

Samsung's incident wasn't unusual. Most plants are running the same tools and haven't found out yet.

Five things you can do this quarter

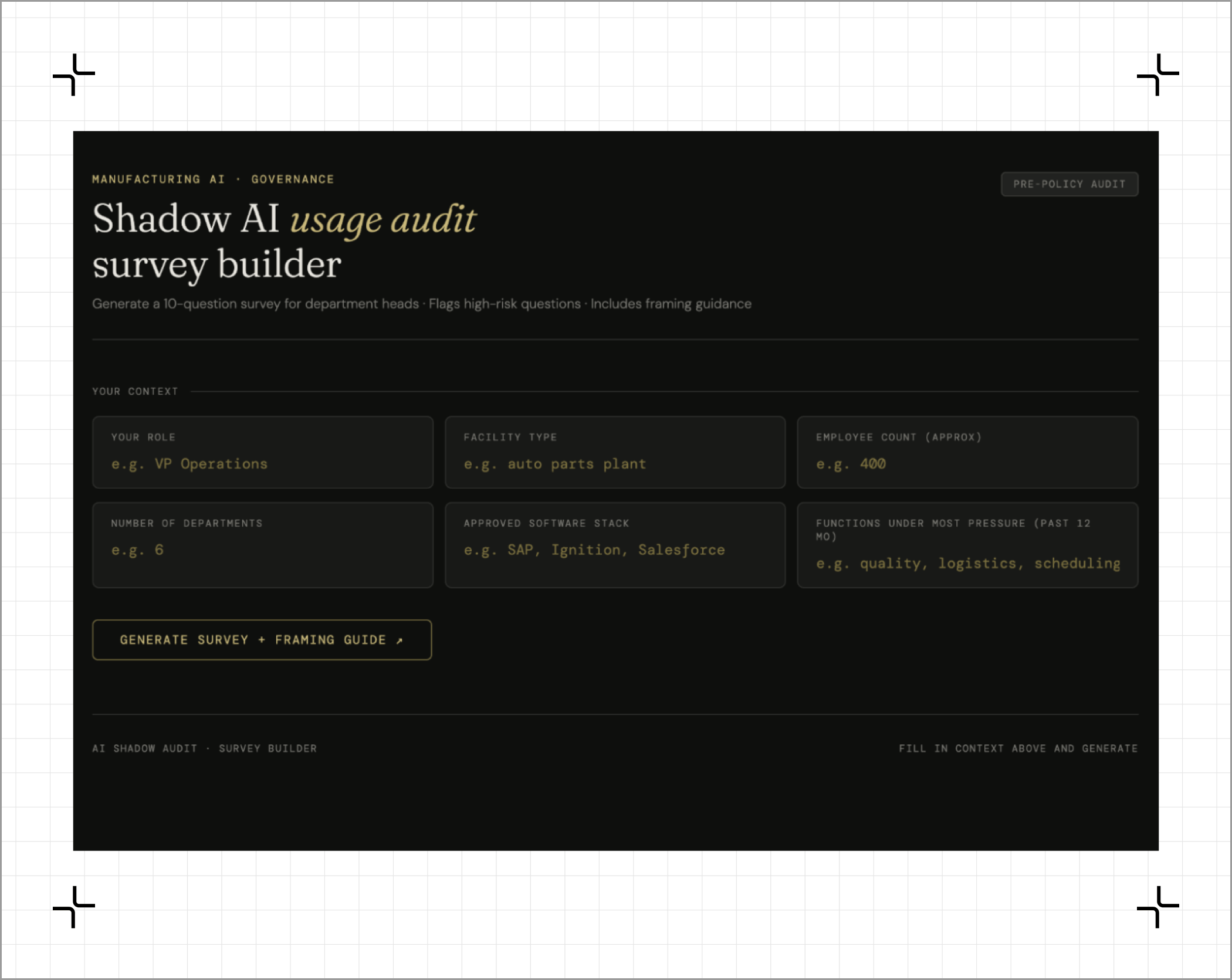

The problem: You suspect your plant is running AI tools that weren't officially approved. You need to understand the scope before deciding what to do.

What you need: Access to two or three department heads, and a rough sense of which functions have faced the most pressure to move faster in the past 12 months.

The Prompt (copy this):

I'm a [YOUR ROLE] at a [FACILITY TYPE] manufacturing plant. I need to conduct an informal audit of AI tool usage across my operations before developing a formal governance policy.

Our plant has approximately [NUMBER] employees across [NUMBER] departments. Functions under most pressure in the past year: [LIST]. Current approved software stack: [ERP/MES/OTHER SYSTEMS].

Help me design a 10-question survey for department heads to understand:

- Which AI tools are in use, approved or not
- What data those tools are processing
- How decisions from those tools are being acted on
- Where the highest-risk exposure likely sits

Flag which questions will surface the most risk if answered honestly, and suggest how to frame the survey so people don't feel they're self-reporting a problem.

What you get: A survey department heads will actually complete, with a guide identifying which responses indicate the highest-risk shadow AI activity in your plant.

IBM Cost of a Data Breach Report 2025

The section on AI-related breaches is the clearest business case for governance available right now. The $670,000 shadow AI premium and 247-day detection lag are the two numbers that shift a CFO conversation from IT cost to risk management investment.

Time to value: 25 minutes

When your ops teams need AI to move faster, do they come to IT or do they find their own tools?

Hit reply. I read every email.

Leo