At JLR, AI systems helped spot machine problems weeks before they caused breakdowns. They also identified small defects that engineers missed during inspections.

This helped cut production time by 18%.

On August 31, 2025, JLR's IT systems went down and production stopped across all three UK plants for five weeks.

The attack was reportedly enabled by Jira credentials stolen with infostealer malware, a known hallmark of HELLCAT's operations.

Suppliers didn't get paid, dealerships couldn't sell cars, and the UK government had to back a £1.5 billion loan to keep the supply chain alive.

The attackers' goal was to bring production to a halt, and the AI integrations created the access points that made the breach possible.

👋🏻 I'm Leonardo Ubbiali. This week we're looking at why connecting AI to factory floors turned cyberattacks from data theft into production shutdown, and what you can do about it.

But before we dive in:

Jeff Bezos is raising tens of billions of dollars for Project Prometheus, the AI startup he co-founded in November 2025, in a round that values the business at roughly $30 billion before the new capital.

This marks Bezos' first operational role since stepping down as Amazon CEO in 2021, and the scale signals he sees manufacturing AI as worth his direct attention.

Bezos serves as co-CEO alongside Vik Bajaj, a physicist who worked with Google co-founder Sergey Brin at Google X, the lab behind projects like Waymo and Wing drone delivery.

The company launched with $6.2 billion in funding and hired close to 100 researchers from OpenAI, DeepMind, and Meta.

Unlike most AI startups, which focus on text and images, Prometheus is building AI for physical systems: models that learn from interactions with the physical world, not just from digital data.

The company is talking to JPMorgan CEO Jamie Dimon about investment through the bank's $10 billion Security and Resiliency Initiative, which aims to reinforce American supply chains.

Rather than building from scratch, Prometheus plans to acquire well-positioned companies and apply AI to compress years of product development into months.

Speaking at Italian Tech Week in 2025, Bezos said: "In the next couple of decades, I believe there will be millions of people living in space, and we will be able to send robots to do work on the surface of the moon."

Project Prometheus appears to be part of that vision, building AI that can design and manufacture in extraterrestrial environments where human oversight is impossible.

For decades, JLR's production systems ran air-gapped. With no network connection, attackers had no way to reach the equipment.

AI deployment changed that architecture. Predictive maintenance needed sensor data flowing from equipment to cloud platforms, and quality inspection required cameras feeding images to edge devices running computer vision models.

JLR had been rolling out these AI systems for two years. Each connection made the factory smarter, but each one also created a path from corporate IT, through AI platforms, into production networks that hadn't existed before.
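If you want to see those paths in your own plant, one simple approach is to treat the network as a graph and search it. Below is a minimal Python sketch, assuming you can export allowed connections as (source, destination) pairs. The zone names are hypothetical placeholders, not JLR's actual topology. A breadth-first search from corporate IT returns the shortest route to each production zone it can reach.

```python
from collections import deque

# Hypothetical connection list: each pair means "source can open a connection to destination".
# Zone names are illustrative placeholders, not a real plant's topology.
CONNECTIONS = [
    ("corp_it", "ai_cloud_platform"),
    ("ai_cloud_platform", "edge_inference"),
    ("edge_inference", "vision_cameras"),
    ("ai_cloud_platform", "sensor_gateway"),
    ("sensor_gateway", "plc_network"),
    ("corp_it", "email"),
]

PRODUCTION_ZONES = {"plc_network", "vision_cameras"}


def reachable_production(start, connections, targets):
    """Breadth-first search from `start`; return {target: shortest path} for each reachable target."""
    graph = {}
    for src, dst in connections:
        graph.setdefault(src, []).append(dst)

    parent = {start: None}  # doubles as the visited set and records how each node was reached
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in parent:
                parent[nxt] = node
                queue.append(nxt)

    paths = {}
    for target in targets:
        if target in parent:
            # Walk parent pointers back to the start to rebuild the route.
            path, node = [], target
            while node is not None:
                path.append(node)
                node = parent[node]
            paths[target] = list(reversed(path))
    return paths


if __name__ == "__main__":
    routes = reachable_production("corp_it", CONNECTIONS, PRODUCTION_ZONES)
    for zone, path in sorted(routes.items()):
        print(f"{zone}: {' -> '.join(path)}")
```

In this toy topology, both routes into production pass through `ai_cloud_platform`, which is the kind of chokepoint worth segmenting first.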

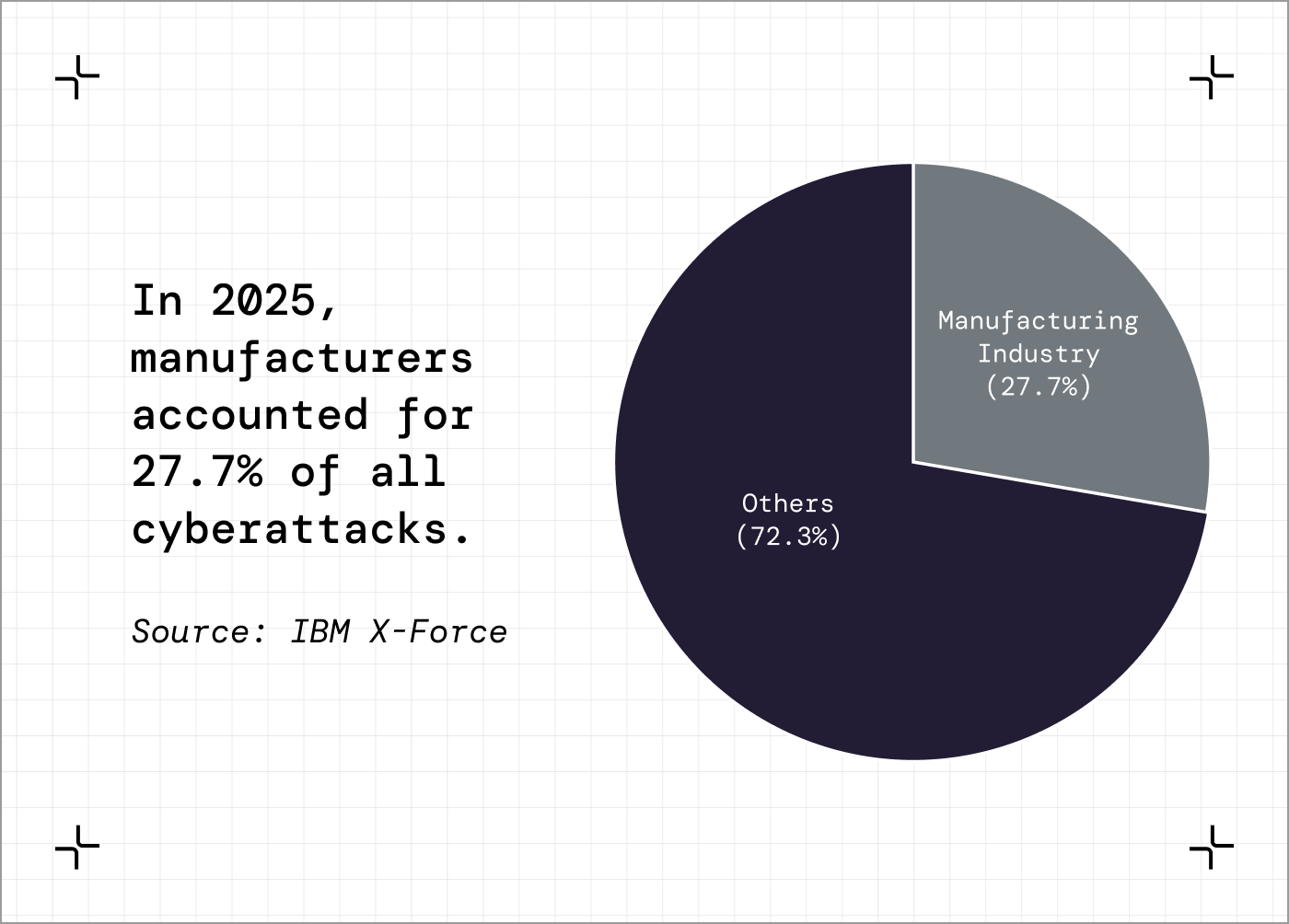

IBM's X-Force Threat Intelligence Index, released February 25, 2026, shows that manufacturing has been the most targeted industry for five consecutive years.

Attacks exploiting public-facing applications increased 44% year-over-year because AI tools help attackers find vulnerabilities faster than security teams can patch them.

At JLR, attackers got into corporate IT and moved laterally through the connections AI deployments created until they reached production networks.

JLR shut everything down on September 1 rather than risk attackers taking control of the operational technology that ran the manufacturing lines.

The forensic investigation took five weeks because every system had to be verified before restarting.

If operational technology had been compromised and JLR turned the machines back on, attackers might still have had control.

JLR then extended the shutdown to October 1 after finding that the attack had reached deeper than first assessed.

Production restarted in phases and hit normal capacity by mid-November, but suppliers operating on just-in-time manufacturing had been without revenue for over a month.

The £1.5 billion government-backed loan wasn't primarily about JLR. It was meant to keep suppliers solvent, because when JLR stopped production, those suppliers had no alternative customers.

The shutdown cost the UK economy an estimated £1.9 billion and affected over 5,000 organizations.

Ciaran Martin, former head of the UK's National Cyber Security Centre, called it "the single most financially damaging cyber event ever to hit the UK."

The scale of the damage came from how quickly the attack could spread once the AI systems had connected IT to production.

JLR didn't test its AI systems for security vulnerabilities before deployment.

A quality inspection model can be poisoned if an attacker compromises the training pipeline and injects bad examples so the model learns to pass defective parts.
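One basic guard against tampering with a training pipeline is to record a hash of every training file when the dataset is reviewed, then block training if anything has been added, removed, or changed since. This is a minimal sketch using only Python's standard library, assuming training examples are files in a single directory; the function names are hypothetical. It detects changes after approval; it can't tell you whether the approved data was clean to begin with.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_file(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, reading in 1 MiB chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(data_dir: Path) -> dict[str, str]:
    """Map each file's path (relative to data_dir, POSIX style) to its hash."""
    return {
        p.relative_to(data_dir).as_posix(): sha256_file(p)
        for p in sorted(data_dir.rglob("*"))
        if p.is_file()
    }


def diff_against_manifest(data_dir: Path, approved: dict[str, str]) -> dict[str, list[str]]:
    """List files added, removed, or modified since the manifest was approved."""
    current = build_manifest(data_dir)
    return {
        "added": sorted(current.keys() - approved.keys()),
        "removed": sorted(approved.keys() - current.keys()),
        "modified": sorted(
            name for name in current.keys() & approved.keys()
            if current[name] != approved[name]
        ),
    }


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "pass").mkdir()
        (root / "pass" / "part_001.txt").write_text("good weld")
        approved = build_manifest(root)  # snapshot taken at review time

        # Simulate an attacker editing an approved example and slipping in a new one.
        (root / "pass" / "part_001.txt").write_text("cracked weld")
        (root / "pass" / "part_999.txt").write_text("injected example")

        changes = diff_against_manifest(root, approved)
        if any(changes.values()):
            print("Training blocked:", changes)
```

Storing the approved manifest somewhere the training pipeline can read but not write keeps an attacker who reaches the data store from simply re-signing their changes.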

A supplier I spoke with last week is deploying predictive maintenance with production systems isolated from corporate IT and AI inference running on edge devices that have no broader network access.

That's what JLR needed: zero-trust architecture for operational technology connections, and secure AI pipelines that validate training data.

Instead, they connected legacy OT to AI platforms and assumed existing firewalls would handle it.

The five-week shutdown proved they wouldn't.
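In practice, zero trust for OT connections means deny by default: a flow between zones is blocked unless a rule explicitly allows it. The sketch below shows only that policy logic, assuming flows are described by source zone, destination zone, and TCP port. The zones and rules are hypothetical, and in a real plant the policy would be enforced by firewalls or OT gateways rather than application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen makes instances hashable, so they can live in a set
class Flow:
    src_zone: str
    dst_zone: str
    port: int


# Hypothetical allowlist: only these exact flows are permitted.
# Telemetry goes out from the sensor gateway; no rule lets the cloud initiate connections back in.
ALLOWED = {
    Flow("sensor_gateway", "ai_cloud_platform", 443),  # HTTPS telemetry upload
    Flow("vision_cameras", "edge_inference", 554),     # RTSP, commonly used for camera streams
}


def is_allowed(flow: Flow) -> bool:
    """Deny by default: a flow passes only if it matches an explicit allow rule."""
    return flow in ALLOWED


if __name__ == "__main__":
    attempts = [
        Flow("sensor_gateway", "ai_cloud_platform", 443),  # expected outbound telemetry
        Flow("corp_it", "plc_network", 502),               # IT reaching PLCs over Modbus/TCP
        Flow("ai_cloud_platform", "sensor_gateway", 443),  # reverse path from the cloud
    ]
    for f in attempts:
        verdict = "ALLOW" if is_allowed(f) else "DENY"
        print(f"{verdict}: {f.src_zone} -> {f.dst_zone}:{f.port}")
```

Only the first flow is allowed: corporate IT can't reach the PLC network directly, and the cloud platform can't open connections back into the plant.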

Five things you can do this quarter

The problem: You're deploying AI but don't know what an attacker could do if they compromised your systems.

What you need:

List of AI systems deployed or planned

Basic network architecture

15 minutes

The prompt (copy this):

I'm a [YOUR ROLE] at a [YOUR FACILITY TYPE] manufacturing plant. We're deploying AI for predictive maintenance, quality inspection, and production optimization. I need to understand our AI security exposure.

Current AI systems:

[List what's deployed]

Network setup:

Are production systems on the same network as corporate IT?

Which systems can be accessed from the internet?

Do AI platforms connect to external cloud services?

Analyze this and tell me:

Can someone poison our AI training data? How would we detect it?

Can adversarial inputs fool our computer vision systems?

Can prompt injection compromise our AI agents?

If an attacker compromised corporate IT, what production systems could they reach through AI connections?

What happens if AI systems go offline for a week?

Be specific about where the vulnerabilities are.

What you'll get back:

An assessment of which AI systems create attack vectors through poisoned training data, adversarial inputs, or prompt injection.

An explanation of how an attacker could move from IT to production through AI connections.

IBM X-Force Threat Intelligence Index 2026

Released February 25, 2026. Documents why manufacturing has been the most targeted industry for five consecutive years, how AI is accelerating both attacks and defense, and why vulnerability exploitation has replaced phishing as the primary entry method.

Time to value: 25 minutes

How many of your AI systems were tested for attacks before they were connected to production?

Hit reply. I read every email.

Leo